Business Analytics Series – Part 3 of 3: Effectively Implement your BA Solution

Contributed by Daniel Ko, Research Manager, Info-Tech Research Group

In the second blog, we explored how you build a team around you to launch your Business Analytics solution with an effective business case. If all went well, you garnered support for the project, selected a vendor, and are now ready for the final step that will truly define the success of the initiative: implementation.

Far too often this critical step is seen as just a final small hurdle to making the “magic” happen with the new whiz-bang technology that was purchased. That perspective gets a lot of people I speak to in trouble (and I often only hear of it after the first attempt has been botched). There are a variety of reasons that implementations go wrong, but by far the most common is that organizations try to do too much, too fast, and the deployment doesn’t meet anyone’s needs.

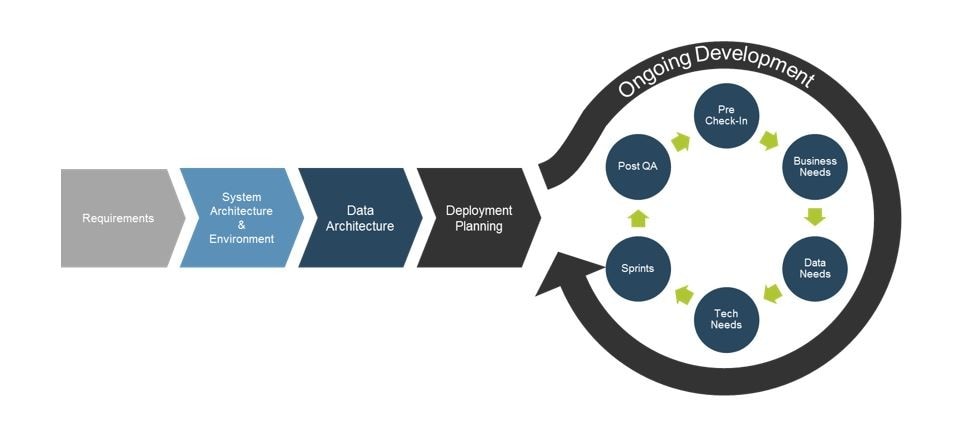

If only I had the opportunity to advise them before they started, I would have recommended a more measured, paced approach to realizing value from their Analytics solution. There are some key steps that must be followed to ensure a successful implementation: Requirements Gathering, Evaluating your System and Data Architecture, and Deployment planning. Where most organizations miss the mark, though, is understanding that with Analytics “go-live” isn’t a single launch date, but rather an iterative process of ongoing development and improvement.

Requirements

Not every project can, or should, be 100% agile, and with this type of technology, it’s often best to start with a waterfall approach to development before getting into a full-on agile approach.

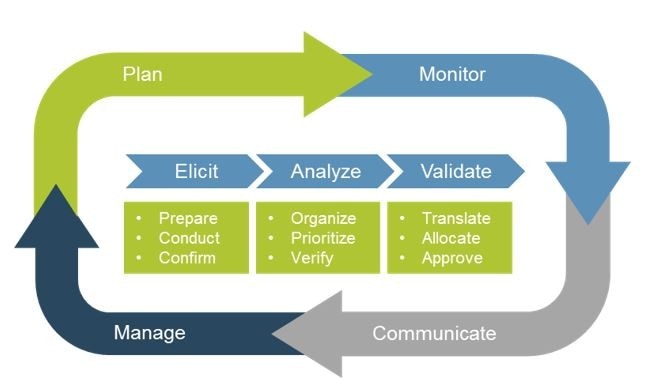

With that in mind, you want to solicit detailed business requirements from the functional units. IT will be responsible for detailing the technical limitations or constraints, and the Business Analyst we talked about in the last blog will be critical in reconciling the business and technical sides. By doing so, you’ll have a deeper, better-researched picture of your needs, and you’re less likely to have to go back and forth on various elements of your deployment.

System Architecture and Environment

Gathering requirements will help shape this next step; for starters, it will give you insight into your deployment model. Are you going to go with an on-premises solution? A cloud solution? Or maybe a hybrid solution? There is a wide range of options, so having elicited and verified your requirements in advance will definitely help you with this step.

Once you’ve evaluated your deployment model, you can go forth and develop the environment and system architecture.

What you want to look at is:

1. Failover, and backup and recovery: How will you make sure you don’t lose your information when you’re migrating your data? Is the storage going to be tiered? Cloud-based or SAN/NAS? Will the failover be hot, warm or cold? Automated, heartbeat, or manual? The best recovery strategy is the one you never have to use, but if things do go wrong, you’ll be glad you thought it through.

2. Configuration: Don’t expect an out-of-the-box solution that you install and run. Your server architecture (Windows/Linux, virtual/physical), databases and connections (SQL/ODB/DB2, SOA or other Middleware), and other factors will influence how you are able to deploy and configure your solution, not the other way around!

3. Patches, Features, and Module Management: Unless you have a critical dependency that requires down-versioning, be sure that you are deploying the most recent version to ensure all security and functionality updates are present. It is also important to have a roadmap and policy in place for when and how frequently you will install new updates (immediately on release, n-1 to avoid release bugs, etc.).
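To make the update policy in item 3 concrete, here is a minimal Python sketch of choosing which release to install under an "immediately on release" versus an "n-1" policy. The function name, the policy labels, and the dotted version strings are all hypothetical; your vendor's versioning scheme may differ.

```python
def version_to_deploy(releases: list[str], policy: str) -> str:
    """Pick a release under a hypothetical update policy.

    Assumes purely numeric, dot-separated versions such as "9.10".
    """
    if not releases:
        raise ValueError("no releases available")
    # Sort numerically so that 9.10 ranks above 9.2.
    ordered = sorted(releases, key=lambda v: tuple(int(p) for p in v.split(".")))
    if policy == "latest":
        return ordered[-1]
    if policy == "n-1":
        # Stay one release behind the newest; fall back to the only release.
        return ordered[-2] if len(ordered) > 1 else ordered[-1]
    raise ValueError(f"unknown policy: {policy}")


available = ["9.2", "10.0", "9.10"]
print(version_to_deploy(available, "latest"))
print(version_to_deploy(available, "n-1"))
```

Whichever policy you pick, the point is to write it down so that update decisions are consistent rather than ad hoc.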

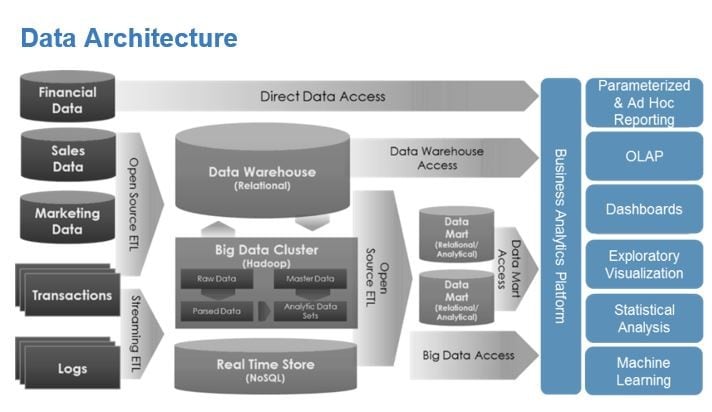

Data Architecture

A typical architecture will have multiple data feeds, some sort of ETL/ELT, data marts, stores, and possibly a warehouse, and any number of reporting and visualization tools drawing on them. Your architecture doesn’t need to be identical to the example graphic; what matters is that, however yours is set up, you are able to trace and map your inputs, storage, processing, and outputs. Analytics will deliver the most value when you expose the solution to as much of that available data as possible, and you can only do that if you know where it is. Some other elements you want to consider are: interoperable data integration, metadata management, content management, data operations, and data development.
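One lightweight way to trace and map inputs, storage, processing, and outputs is to record each component along with what feeds it, then walk that map backwards from any report. The Python sketch below is only an illustration: the component names, the `Node` class, and the `trace_sources` helper are hypothetical, and it assumes the map has no circular dependencies.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    kind: str  # "source", "etl", "store", or "report"
    inputs: list[str] = field(default_factory=list)


def trace_sources(nodes: dict[str, Node], name: str) -> set[str]:
    """Return every source feed that the named component ultimately depends on."""
    node = nodes[name]
    if node.kind == "source":
        return {name}
    found: set[str] = set()
    for upstream in node.inputs:
        found |= trace_sources(nodes, upstream)
    return found


# Hypothetical architecture: two feeds, one ETL job, one mart, one dashboard.
catalog = {n.name: n for n in [
    Node("crm_feed", "source"),
    Node("billing_feed", "source"),
    Node("nightly_etl", "etl", ["crm_feed", "billing_feed"]),
    Node("sales_mart", "store", ["nightly_etl"]),
    Node("revenue_dashboard", "report", ["sales_mart"]),
]}

print(sorted(trace_sources(catalog, "revenue_dashboard")))
```

Even a simple map like this answers the question "where does this number come from?" before the analytics tool is pointed at the data.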

Deployment and Planning

There are a number of elements you want to consider when deploying your solution to ensure that there is buy-in, goals are met, and the solution is deployed correctly. Four key items that you will want to have thoroughly documented are: a migration plan, rollback plan, training plan, and support plan. Once you’ve deployed the solution, make sure you track your metrics to evaluate whether the BA solution is doing what you needed it to do. Also, hold a post-implementation review (PIR): I have found in my years of experience that there are ALWAYS lessons to be learned from an implementation, even one that was deployed correctly.

It may seem like a simple idea to pilot the technology rather than going “big bang”; however, organizations get caught up in the excitement of the perceived value they will achieve with analytics and forget the basic principles of software deployment!

A good pilot project for an Analytics solution will have the following traits:

1. Low visibility: You don’t want this to fail in front of a lot of people who need something urgently. Delivering at or above expectation, even for a lower profile project, will build much needed momentum and internal champions for future additions.

2. High data quality: Insights driven through analytics are only going to be valuable if the data behind them is good. Pick data sources where you know the data is accurate and up to date.

3. Low complexity: Look for an opportunity to deliver tangible, defined value that can be easily understood by the business stakeholders. If it takes an advanced degree in math or statistics to understand how Analytics provided benefit, then it’s not right for a pilot!
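If you have several candidate pilots, the three traits above can be turned into a rough comparison. The Python sketch below is an assumption-laden illustration, not a formal method: the 1-to-5 ratings, the equal weighting, and the candidate projects are all made up for the example.

```python
from dataclasses import dataclass


@dataclass
class PilotCandidate:
    name: str
    visibility: int    # 1 = low profile, 5 = highly visible
    data_quality: int  # 1 = poor, 5 = accurate and up to date
    complexity: int    # 1 = simple, 5 = needs advanced statistics


def pilot_score(c: PilotCandidate) -> int:
    """Higher is better: reward low visibility, high data quality, low complexity."""
    return (6 - c.visibility) + c.data_quality + (6 - c.complexity)


# Hypothetical candidates with illustrative ratings.
candidates = [
    PilotCandidate("Executive revenue forecast", visibility=5, data_quality=3, complexity=4),
    PilotCandidate("Warehouse restocking report", visibility=2, data_quality=5, complexity=2),
    PilotCandidate("Customer churn model", visibility=4, data_quality=2, complexity=5),
]

best = max(candidates, key=pilot_score)
print(best.name, pilot_score(best))
```

The numbers matter less than the conversation they force: rating each candidate makes it obvious when an exciting project is too visible, too messy, or too complex to go first.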

About the Author:

Daniel Ko has been in the information management field for over 10 years and he has a solid understanding of BI, BI strategies, analytics, data integration, ETL, data quality, data hygiene, data architecture, data warehousing, datamarts, GIS (Geographic Information Systems), Big Data, and Master Data Management (MDM). He has assumed the roles of Subject Matter Expert (SME), Project Technical Lead, Project/BI/Data Warehouse Architect, Project Assessor, Data Analyst, and Business Analyst in various projects and positions.

Prior to joining Info-Tech Research Group, Daniel worked at Project X Consulting as a Senior Technical Consultant and helped telecommunication, insurance, and healthcare clients on their information projects. Formerly he served as a BI Team Lead at Adesa, coaching the BI team and helping external and internal clients to leverage data and data management technologies. Earlier in his career, Daniel worked as a Data Warehouse Analyst at TELUS Mobility and enabled the business to uncover knowledge and insights. Daniel also worked in the automotive and utility industries.

Daniel received his B.Sc. from the University of Toronto, where he majored in Molecular Genetics and took a number of courses in GIS (Geographic Information Systems).

Daniel is MicroStrategy CPD, CDD, CRD, and Oracle 9i DBA-OCP certified. Daniel is also a certified PMP.
